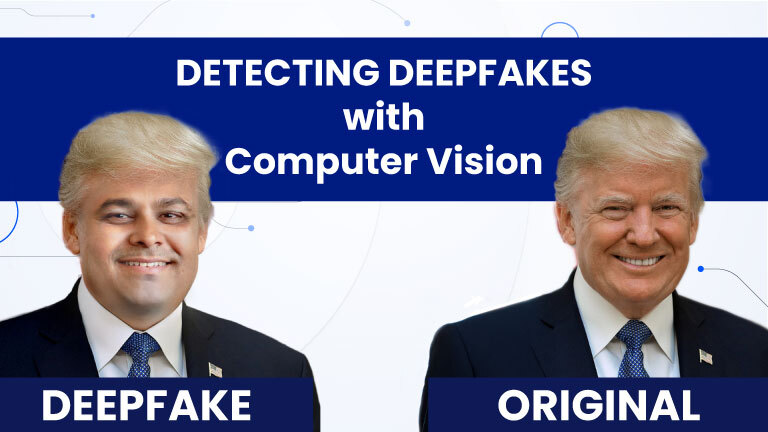

Despite the numerous positive effects that AI brings to today’s world, we now face unprecedented forms of cyberattack. One of the most advanced attacks created by abusing AI is the deepfake: an artificial image, video, or audio clip generated with deep learning, a specialized branch of machine learning. Deepfakes are created for various purposes, such as impersonation scams, bypassing security systems, and disinformation attacks.

Because AI has become so accessible so quickly, it is important to acknowledge the harm caused by people who use it with malicious intent. More and more people are creating deepfakes out of curiosity, as the process itself isn’t too demanding; people with no basic knowledge of how deep learning works can now create deepfakes, a simple yet effective tool for deception.

The three major uses of deepfakes mentioned above are:

1. Impersonation scams

- Impersonation scams occur when attackers create fake audio or video of a person the victim trusts, such as someone close to the victim or the victim’s boss. By making employees believe they are talking to their boss, an attacker can trick them into sending money or sensitive data. In the same way, an attacker can create a video or audio message impersonating anyone else the victim trusts and use it to obtain sensitive information.

- So many people fall for these impersonated video or audio messages because people in today’s busy society often don’t take the time to question what they see and hear, and because people trust familiar voices and faces. This makes deepfakes more convincing than ordinary phishing.

2. Using deepfakes to bypass security measures

- Attackers realized that they could use deepfake technology to trick and bypass security systems, especially biometric authentication used as part of two-factor authentication (2FA), which previously gave attackers a hard time. The goal here is to gain unauthorized access to accounts or secure systems. Because biometrics were known to be reliable and hard to crack, they have been widely adopted. Now, however, deepfakes pose an even greater security problem, as they give attackers a whole new way to trick authentication systems that could not be cracked so easily before.

3. Disinformation attacks

- Disinformation attacks are not aimed at one particular person. They are often carried out by individuals who take delight in stirring up conflict by creating fake videos of politicians or leaders saying harmful or false things. These individuals act as “trolls,” spreading panic and confusion across media, and their fabricated videos can influence elections or damage reputations, even if only briefly. Disinformation is also used to destabilize organizations or even countries by sowing mass confusion between different parties.

In response to the rising concern over deepfakes, some organizations have been working on tools that identify them. However, these tools are often not free for all users and aren’t fully accurate.

Deepfakes depend heavily on the data the model has been fed: the more images and recordings available of the person the attacker is trying to impersonate, the easier it is for the AI to replicate that person’s facial and vocal features. Consequently, attackers used to be able to impersonate only widely known public figures with a lot of media coverage, which left them with only so many convincing examples to fool others.

This is no longer the case, however, as modern AI models are far more advanced and can learn patterns from very small samples. Modern models already have a general understanding of human faces and voices, so a creator no longer has to supply massive amounts of data to train a model from scratch. As a result, many more people, often with less understanding of the consequences, can now create more advanced deepfakes that can impersonate virtually anyone with some dedication.

(https://deepstrike.io/blog/deepfake-statistics-2025)

Regular people are experiencing more and more personalized deepfake attacks because attackers no longer need a famous target to start creating deepfakes; even a short video clip from social media or a recording of a call can be enough. Deepfakes used to be data-heavy and limited to public figures, whereas now they require very little data, making them much more dangerous in everyday cyberattacks.

By Geumchang An

Works Cited

“Deepfakes.” University of Virginia, Information Security, https://security.virginia.edu/deepfakes. Accessed 29 Mar. 2026.

Naffi, Nadia. “Deepfakes and the Crisis of Knowing.” UNESCO, 1 Oct. 2025, https://www.unesco.org/en/articles/deepfakes-and-crisis-knowing. Accessed 29 Mar. 2026.